Quick Rundown

-

OpenAI rebuilt GPT Image 2 from scratch and is retiring DALL-E. This is now their only image model going forward.

-

GPT image 2.0 uses disruptive tech. It generates images like LLMs generate text vs the diffusion process used by all prior models.

-

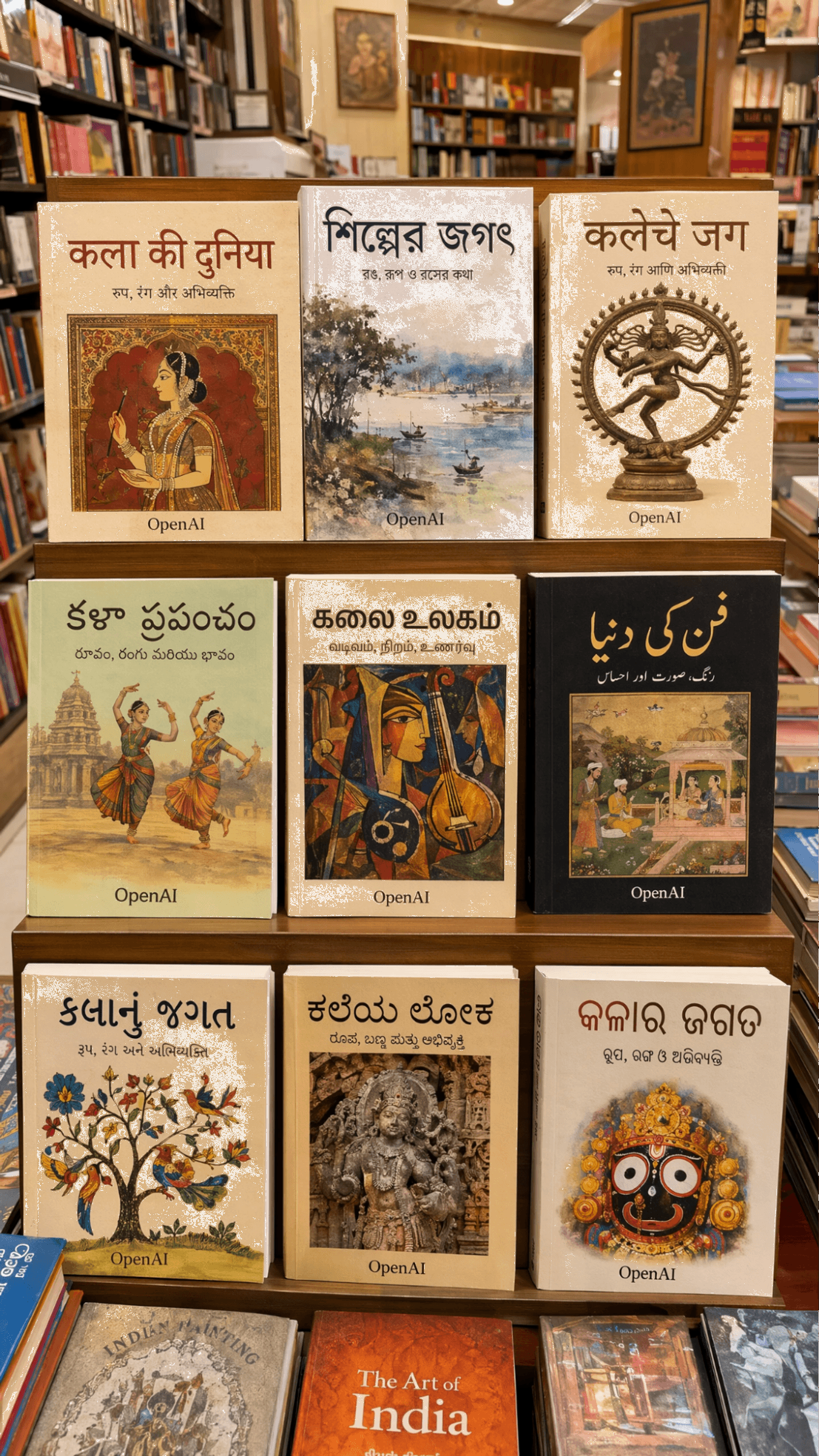

The biggest leap is text rendering: 99% accuracy in English, and over 90% in Chinese, Japanese, Korean, Hindi, Bengali, and Arabic.

-

It is the first image model with built-in reasoning, meaning it can plan layouts, pull information from the web, and verify its own output before delivering.

-

Aspect ratios now range from 3:1 to 1:3 with native 16:9 and 9:16 support, and standard output sits at 2K resolution, with 4k support through the API in beta.

-

This article covers the architecture shift behind it, the five features that matter most, where it still falls short, how it compares to Midjourney, FLUX, and Nano Banana 2, and how to use it inside a larger workflow with invideo.

A New Era Of AI Image Generation

On April 21, 2026, OpenAI released GPT Image 2.

GPT Image 1 launched in March 2025. GPT Image 1.5 followed in December 2025. Image 2 arrives just four months later.

Three models in thirteen months. The pace tells you that OpenAI is not satisfied with where things stood.

And this is not just a minor update.

OpenAI rebuilt the architecture from scratch.

The model no longer runs on the GPT-4o image pipeline that powered earlier versions. And to make the commitment unmistakable, OpenAI is shutting down DALL-E 2 and DALL-E 3 on May 12, 2026.

GPT Image 2 is their only image model going forward. No fallback, no legacy option. They have the confidence and the results to back this up.

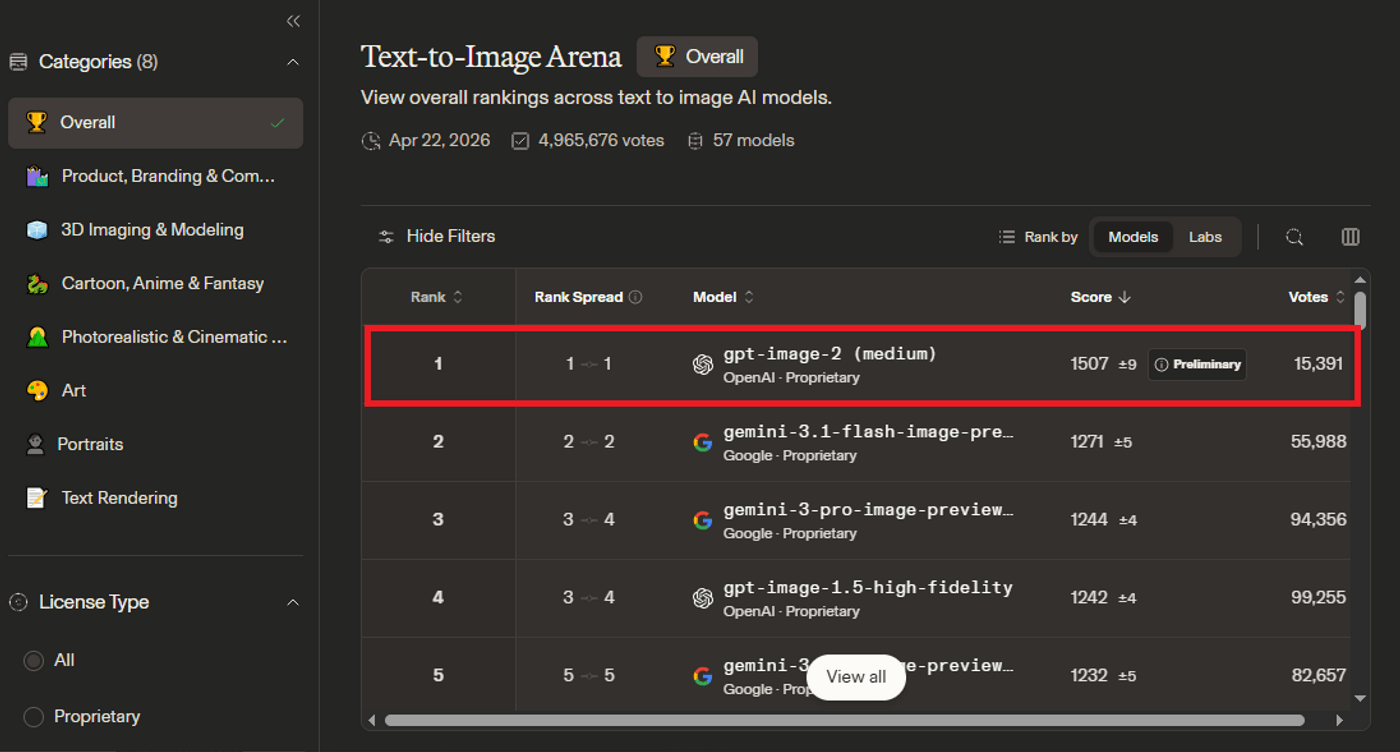

Within hours of launch, GPT Image 2 took the #1 position on every Image Arena leaderboard:

- Text-to-image
- Single-image editing
- Multi-image editing

Its text-to-image Elo score of 1512 sits 242 points above the next closest model, Nano Banana 2. That is the widest margin the Arena has ever recorded. Margins like that do not come from tweaking an existing system. Something foundational has changed.
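
To put a 242-point gap in perspective, the classic Elo formula converts a rating difference into an expected head-to-head win rate. The sketch below assumes the Arena's scores behave like standard Elo ratings on a 400-point scale, which is our assumption rather than something the leaderboard confirms here. The function name is illustrative.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the classic Elo formula (400-point scale)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


gpt_image_2 = 1512
runner_up = gpt_image_2 - 242  # 1270, derived from the 242-point margin quoted above

# Assumption: Arena ratings can be read like standard Elo ratings.
print(f"{expected_win_rate(gpt_image_2, runner_up):.1%}")
```

Under that assumption, the higher-rated model would be preferred in roughly four out of five direct comparisons.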

Let’s break down what GPT Image 2 can actually do, where it still falls short, and how you can use it inside invideo as part of a larger creative workflow.

Why AI Image Generators Could Never Get Text Right (Until Now)

Every major image generator before this, including DALL-E 3, Midjourney, and Stable Diffusion, ran on a diffusion architecture.

Diffusion models start with random visual noise and gradually remove it until an image appears. The process is called denoising, and it is brilliant at generating photorealistic scenes, faces, and objects.
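
As a rough intuition for denoising, here is a toy sketch. It is a caricature, not how any production model works: a real diffusion model uses a trained neural network to predict the noise, while this stand-in simply knows the target. The function name and the four-value "image" are hypothetical.

```python
import random


def toy_denoise(target, steps=50, seed=0):
    """Start from pure noise and nudge every value toward the target at each step.

    Assumption: the 'noise prediction' here is exact, which a real model only
    approximates with a trained network.
    """
    rng = random.Random(seed)
    image = [rng.gauss(0.0, 1.0) for _ in target]  # pure random noise
    for step in range(steps):
        remaining = steps - step
        # All values are refined together; close 1/remaining of the gap each step,
        # so the final step (remaining == 1) lands on the target.
        image = [x + (t - x) / remaining for x, t in zip(image, target)]
    return image


target_pixels = [0.1, 0.8, 0.8, 0.1]  # hypothetical 4-pixel "image"
result = toy_denoise(target_pixels)
print([round(v, 3) for v in result])
```

The point to notice is that every pixel is adjusted at once, as a field of numbers. Nothing in the loop treats a letter as a discrete symbol.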

Text is where it all falls apart.

In any training image, the actual text occupies a tiny percentage of the total pixels. A photograph of a coffee shop might contain thousands of pixels of walls, furniture, and lighting, but only a thin strip of pixels for the "OPEN" sign on the door.

So diffusion models learned what text looks like. Not what it means.

The model understood that a sign should have shapes that look like letters, but it had no concept of what makes the number "4" different from the number "9." They are both just pixel arrangements.

That is why every AI image generator produced gibberish on signs, menus, and labels. The model was mimicking the appearance of language without understanding the structure of it.

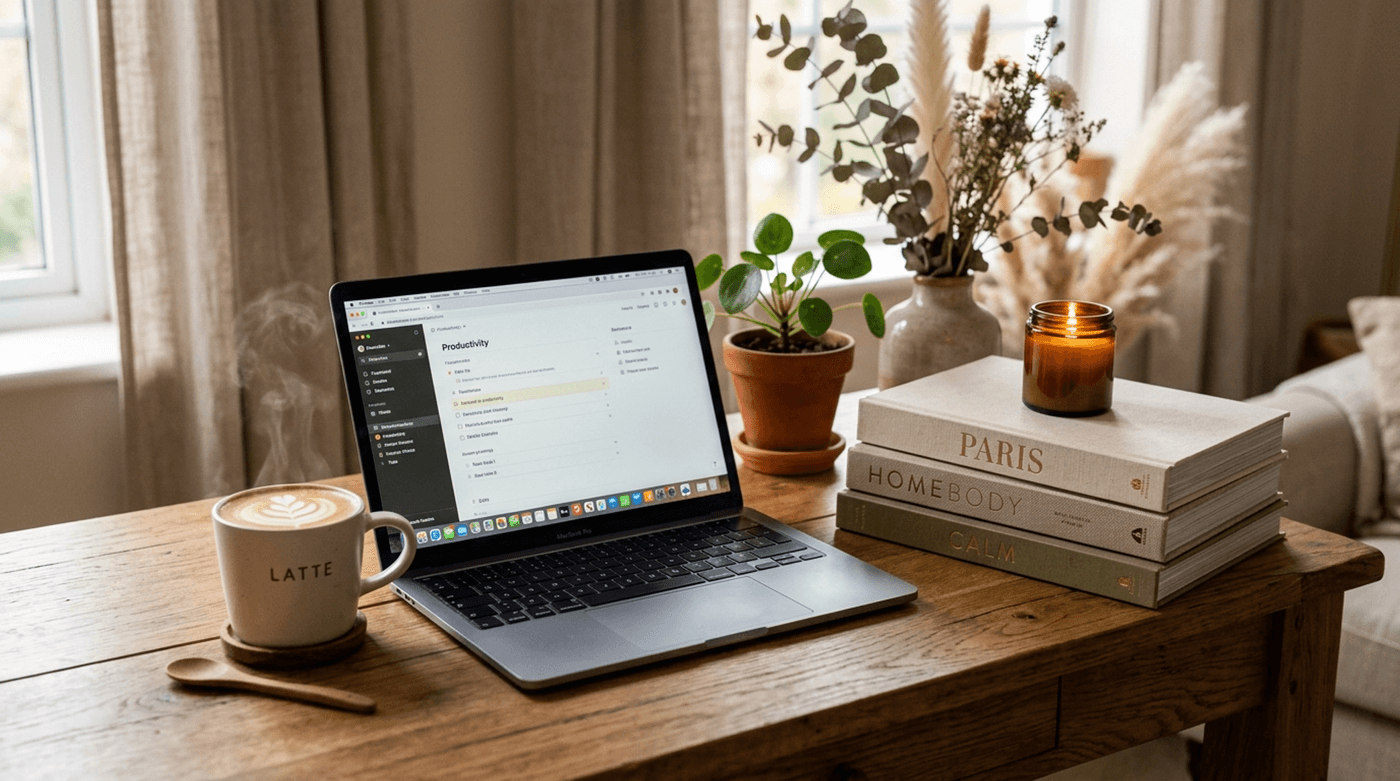

GPT Image 2.0 vs. Nano Banana 2

No amount of fine-tuning could fix this, because the problem was architectural.

GPT Image 2 takes a fundamentally different approach.

Based on output metadata and behavioral analysis, GPT Image 2 is autoregressive. It generates images the way a language model generates text: one token at a time, each predicted based on what came before.

What that means in practice: the model processes text and pixels through the same pipeline.

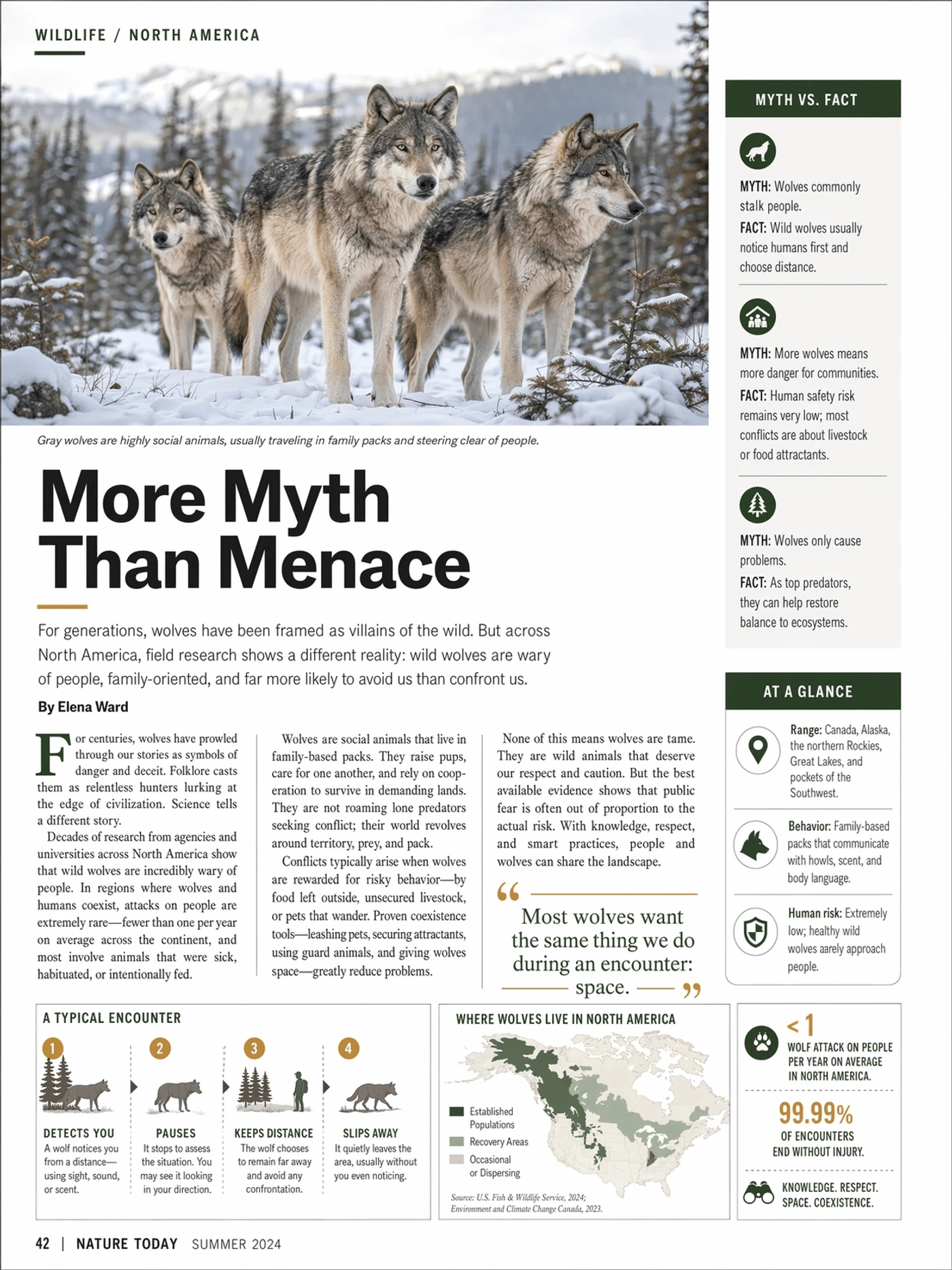

When you ask it to write a headline like "More Myth Than Menace" on a poster, it is not drawing shapes that resemble letters. It is constructing them as language, token by token, with the same precision it uses to write a sentence in chat.

The image and the text are part of the same sequence.
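
A toy sketch of that token-by-token loop is shown below. Everything in it is a stand-in: OpenAI has not published the model's tokenization, so the token names, the lookup table, and the `generate` helper are all hypothetical. A real model would also condition on the whole sequence, not just the last token.

```python
from typing import Callable, List

# Hypothetical stand-in for a trained model: maps the last token to the next one.
TOY_TRANSITIONS = {
    "<prompt>": "<patch:door>",
    "<patch:door>": "<glyph:O>",
    "<glyph:O>": "<glyph:P>",
    "<glyph:P>": "<glyph:E>",
    "<glyph:E>": "<glyph:N>",
    "<glyph:N>": "<end>",
}


def toy_next_token(sequence: List[str]) -> str:
    """Predict the next token from what came before (here: only the last token)."""
    return TOY_TRANSITIONS[sequence[-1]]


def generate(prompt: List[str], next_token: Callable[[List[str]], str], max_new: int = 16) -> List[str]:
    """Autoregressive loop: append one predicted token at a time until <end>."""
    sequence = list(prompt)
    for _ in range(max_new):
        token = next_token(sequence)
        if token == "<end>":
            break
        sequence.append(token)
    return sequence


print(generate(["<prompt>"], toy_next_token))
```

In this sketch the letters of "OPEN" are discrete steps in the same sequence as the image content, which is the property the article attributes to GPT Image 2.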

The result: text rendering accuracy jumped from roughly 90-95% on GPT Image 1.5 to 99% on GPT Image 2.

GPT Image 2.0 (image credits: OpenAI) vs. Nano Banana 2

5 GPT Image 2 Features That Mark A New Era

From built-in reasoning to 99% text accuracy, multilingual rendering, flexible aspect ratios, and precise composition control, these five features make GPT Image 2 a generational leap.

1. The First Image Model That Reasons Before It Generates

GPT Image 2 is the first image model that actually thinks before it draws.

It uses the same o-series reasoning that powers OpenAI's thinking models for text. Before generating a single pixel, it can:

- Analyze what the prompt is really asking for
- Plan the layout and spatial relationships
- Pull information from the web
- Reason through compositional constraints

Base image generation is available to all ChatGPT users.

When a thinking or pro model is selected, the model takes more time and works more deliberately. It can:

- Search the web for real-time information during generation
- Transform uploaded materials into visual explainers
- Produce up to ten distinct images at once
- Maintain character and object continuity across all of them

The tradeoff is simple: base generation is fast and works for most tasks. Selecting a thinking or pro model is slower but gives you the reasoning layer, web search, multi-image output, and self-verification.

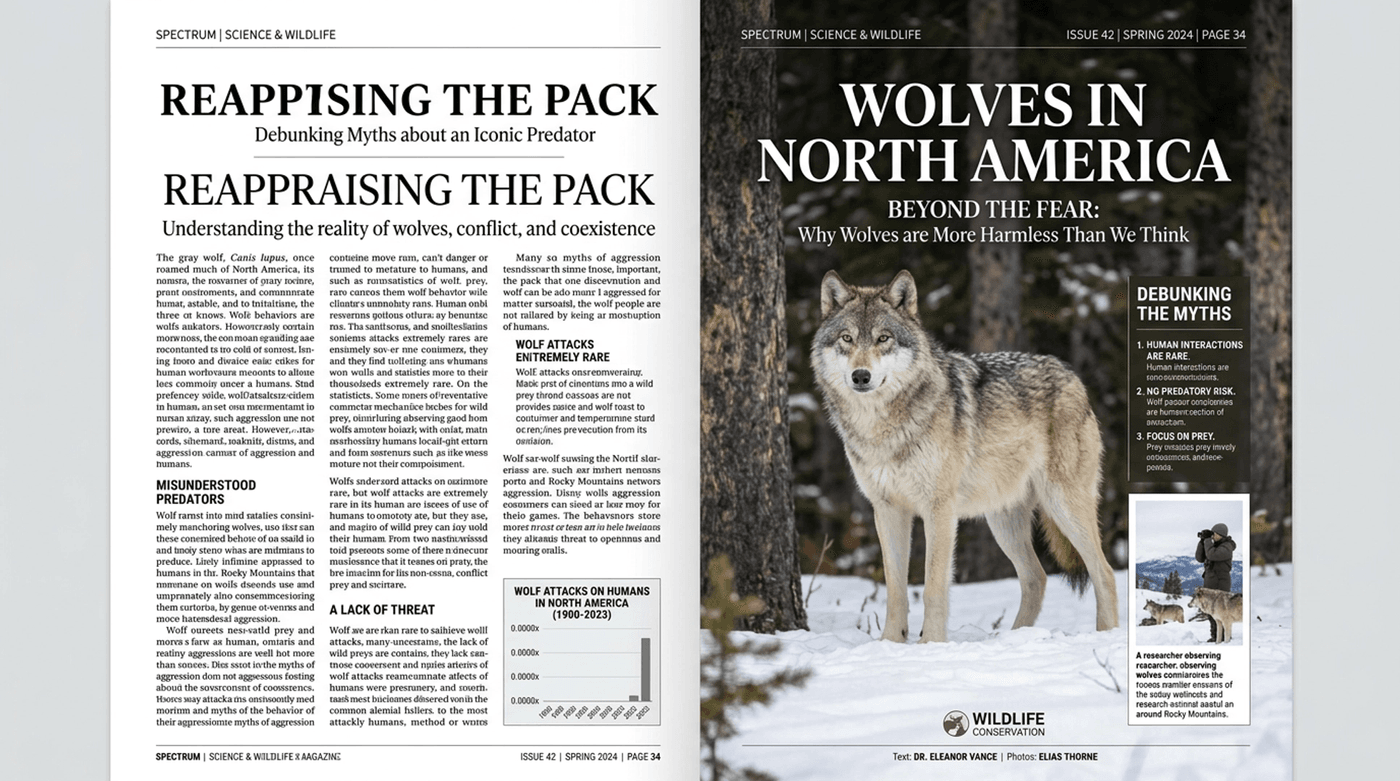

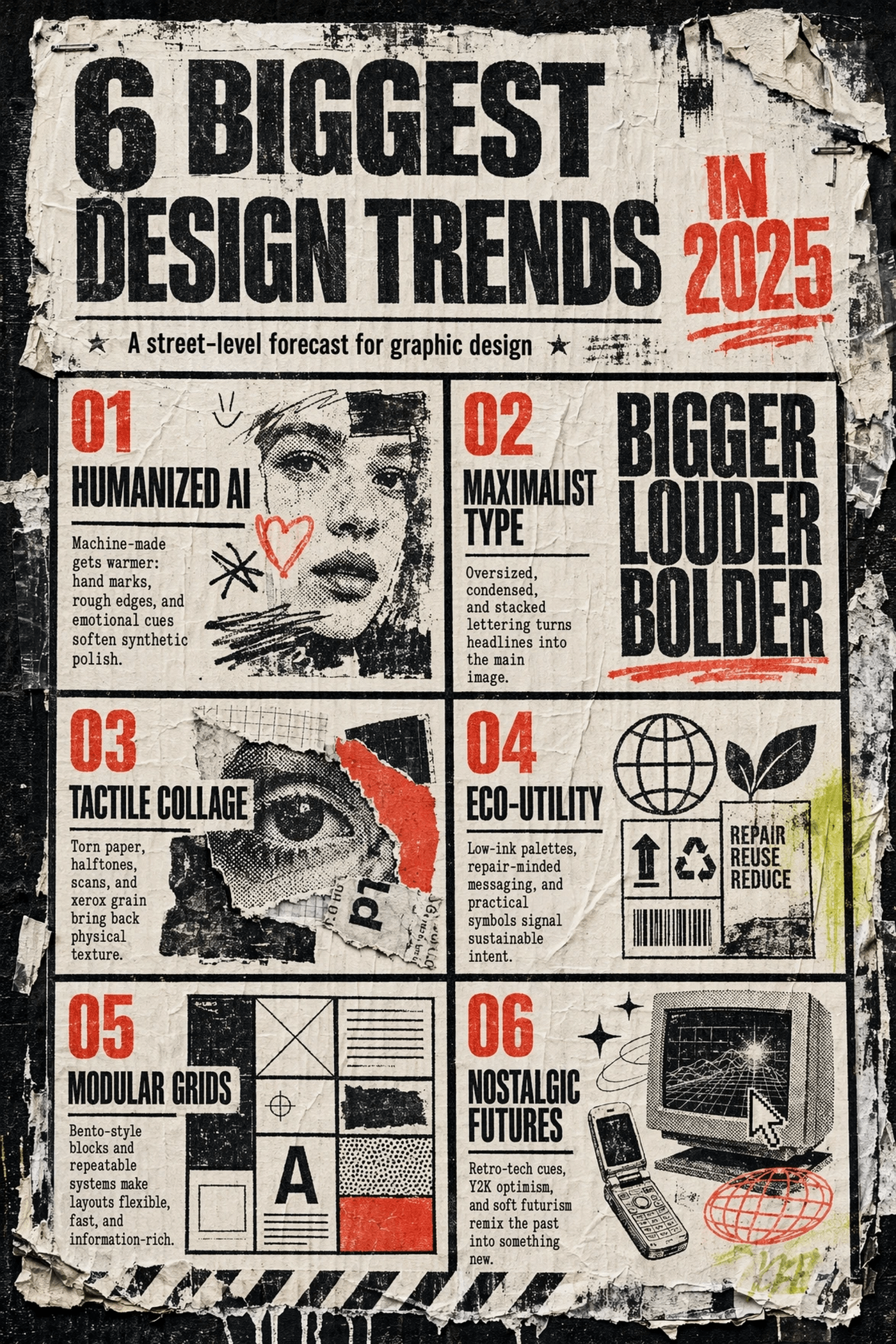

2. GPT Image 2.0 Text Rendering Offers 99% Accuracy

Image credits: OpenAI

This is the feature that finally makes AI image generation worth taking seriously for production work.

GPT Image 2 generates:

- Full pages of readable text
- Magazine covers with correctly spelled headlines
- Product labels with accurate brand copy
- Scientific diagrams with proper annotations
- Restaurant menus where every dish is a real word

All in a single pass, no cleanup required.

Now let’s have a look at the numbers.

GPT Image 1.5 scored roughly 90 to 95% on text accuracy. That sounds high enough. It is not.

90% accuracy means one in ten words could be wrong. On a poster with a headline, a subheadline, and a call to action, you are almost guaranteed an error somewhere. That one misspelled word is why the image never ships without a trip through an image editor.
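
The arithmetic behind that claim is short. The sketch below assumes the quoted accuracy applies per word and that errors are independent, both simplifications. The 12-word poster is a hypothetical example.

```python
def chance_of_any_error(word_accuracy: float, word_count: int) -> float:
    """Probability that at least one word is wrong, assuming independent per-word errors."""
    return 1.0 - word_accuracy ** word_count


poster_words = 12  # hypothetical: headline + subheadline + call to action

for accuracy in (0.90, 0.95, 0.99):
    risk = chance_of_any_error(accuracy, poster_words)
    print(f"{accuracy:.0%} per-word accuracy -> {risk:.0%} chance of at least one error")
```

For a 12-word poster this works out to roughly a 72% chance of an error at 90% accuracy, about 46% at 95%, and about 11% at 99%.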

GPT Image 2 pushes that to a claimed 99% for both Latin and CJK scripts. In real-world usage, most single-pass outputs come back clean.

It treats typography as a design element. Placement, sizing, hierarchy. Text sits inside the composition the way a designer would place it, not floating on top like an afterthought.

For anyone producing ads, packaging, social content, or branded materials: this is the line between "interesting demo" and "output you can actually use."

That accuracy extends beyond English. And that is where things get really interesting.

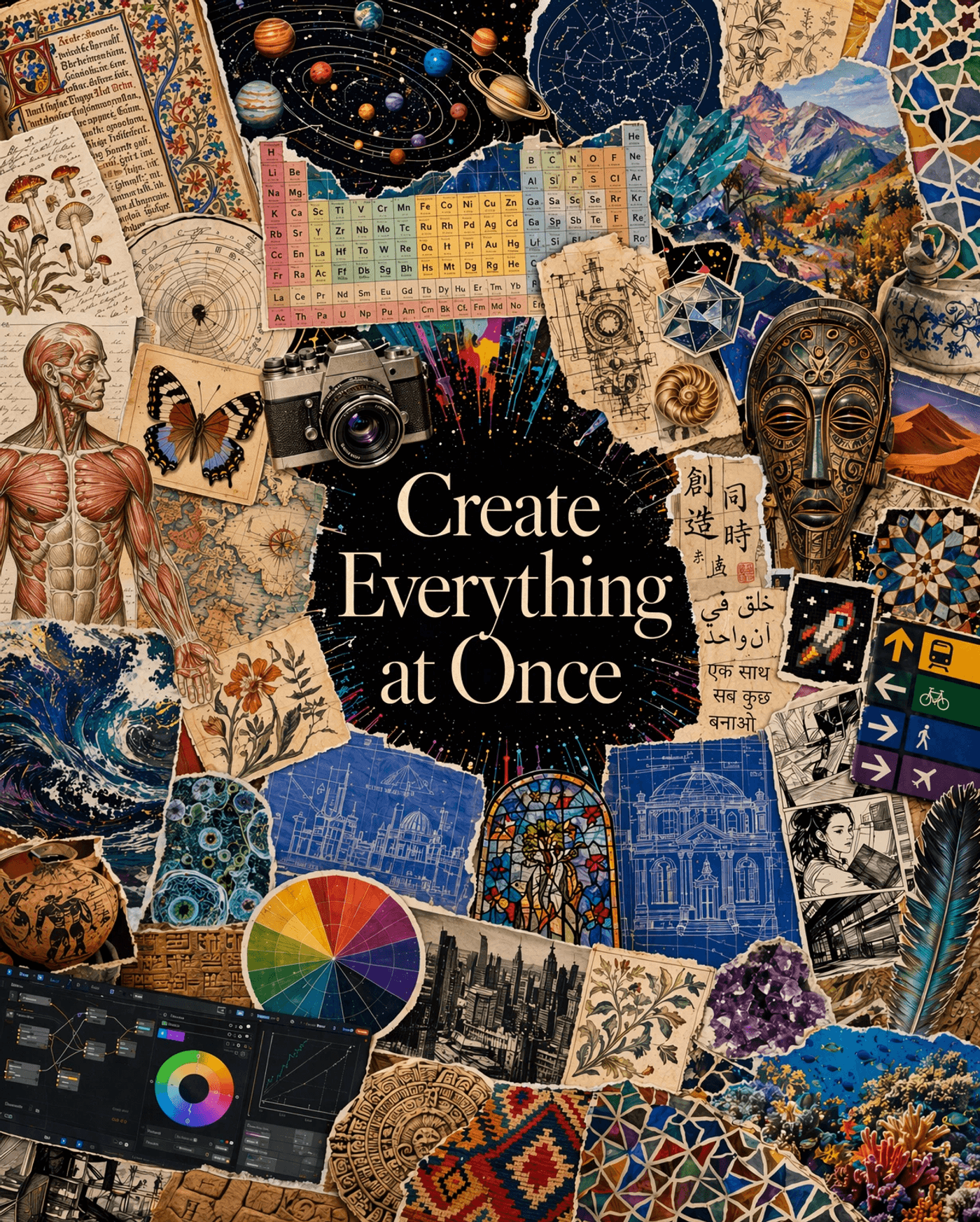

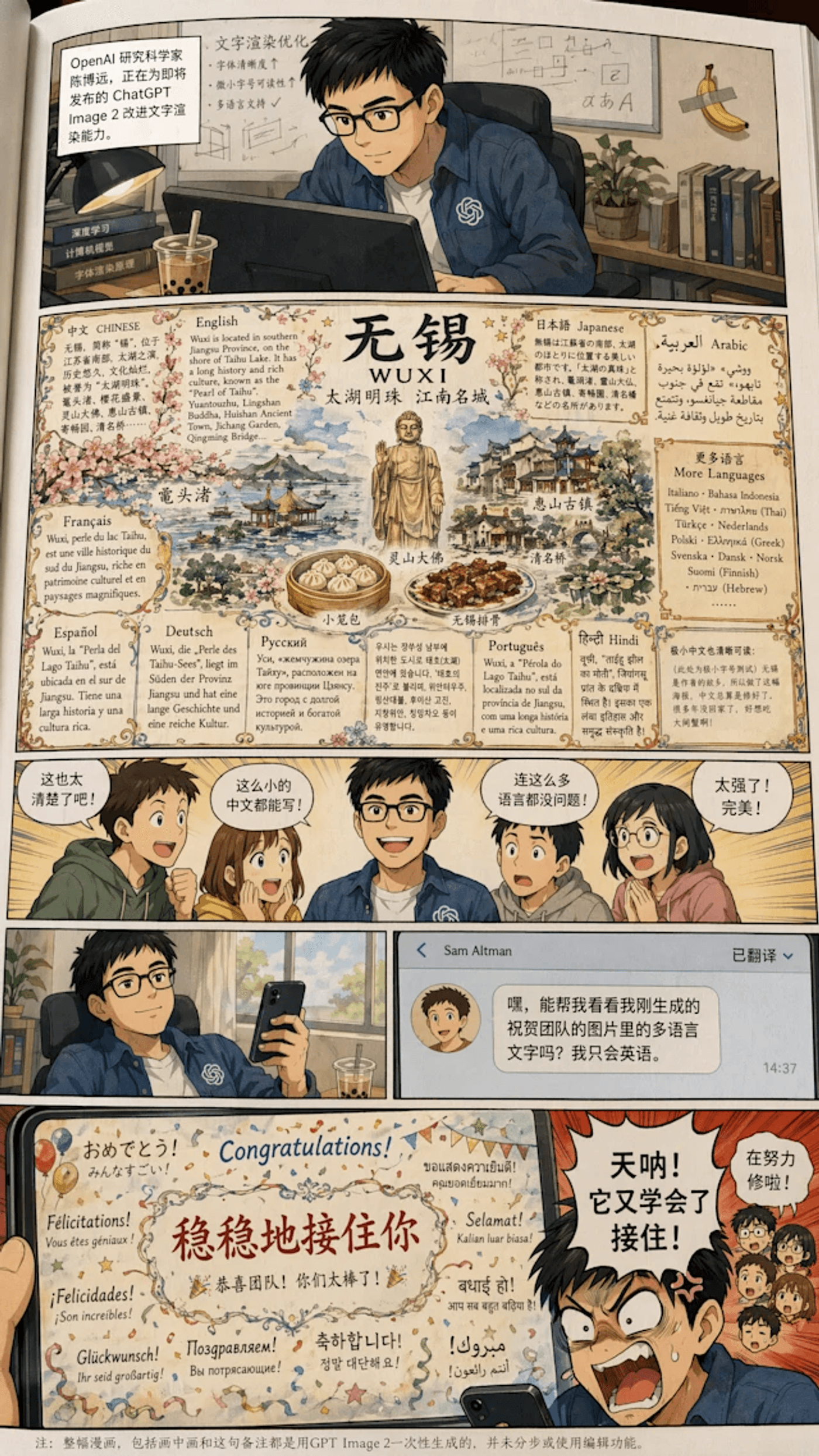

3. Multilingual Text: CJK, Hindi, Bengali, Arabic

Image credits: OpenAI

Previous models could barely spell English words correctly. Non-Latin scripts were out of the question.

GPT Image 2 renders text in Chinese, Japanese (including both Kanji and Hiragana character sets), Korean, Hindi, Bengali, and Arabic.

These are writing systems with thousands of unique characters and complex structural rules. Getting them right is orders of magnitude harder than Latin text, and the fact that it works opens AI image generation to markets that were completely locked out before.

Product packaging in Mandarin. Social campaigns in Hindi. UI mockups in Japanese. K-pop fan assets in Korean. All of these are now viable without a manual text correction pass.

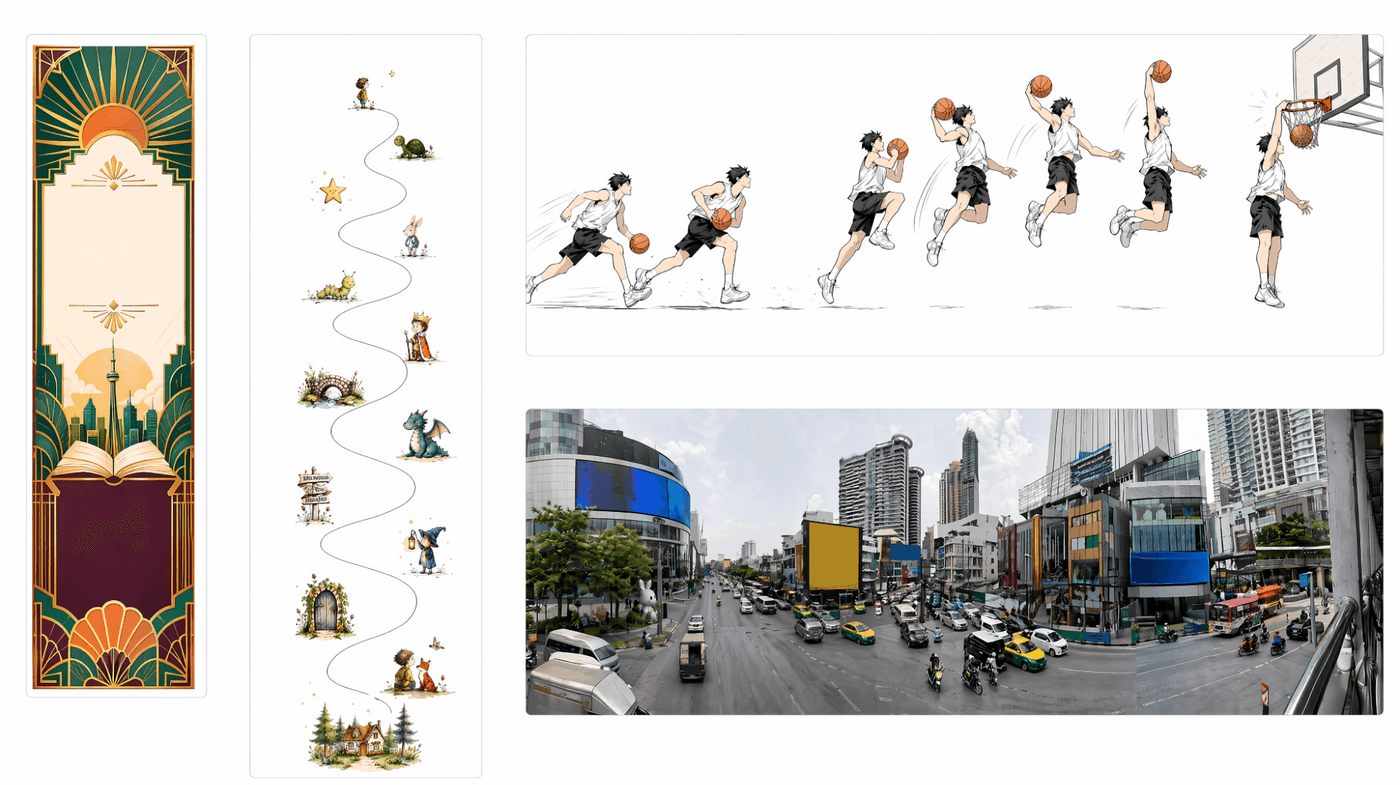

4. Resolution, Aspect Ratios, and Color Accuracy

Image credits: OpenAI

The standard output resolution is 2K (2048 pixels). 4K is available through the API in beta, though OpenAI flags anything above 2560x1440 as experimental.

The resolution bump is significant, but the real quality-of-life improvement is aspect ratios.

GPT Image 1.5 gave you three options: 1:1, 3:2, and 2:3.

That meant every YouTube thumbnail, every Instagram Story, every wide banner had to be cropped after generation. An extra step, every time, that should not exist.

GPT Image 2 supports everything from 3:1 (ultra-wide) to 1:3 (tall vertical), including native 16:9 and 9:16.
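
If you plan sizes in advance, a small helper can check whether a ratio falls inside that 3:1 to 1:3 range and work out matching pixel dimensions. This is a sketch under assumptions: it treats 2048 pixels as the long edge of a 2K output, and the function names are hypothetical. The actual API may only accept specific sizes.

```python
from fractions import Fraction

MIN_RATIO = Fraction(1, 3)  # tall vertical limit quoted above
MAX_RATIO = Fraction(3, 1)  # ultra-wide limit quoted above


def is_supported_ratio(ratio_w: int, ratio_h: int) -> bool:
    """True if width:height lies between 1:3 and 3:1 inclusive."""
    return MIN_RATIO <= Fraction(ratio_w, ratio_h) <= MAX_RATIO


def dimensions_for(ratio_w: int, ratio_h: int, long_edge: int = 2048) -> tuple:
    """Pixel dimensions for a ratio, assuming long_edge is the longer side."""
    if not is_supported_ratio(ratio_w, ratio_h):
        raise ValueError(f"{ratio_w}:{ratio_h} is outside the 3:1 to 1:3 range")
    if ratio_w >= ratio_h:
        return long_edge, round(long_edge * ratio_h / ratio_w)
    return round(long_edge * ratio_w / ratio_h), long_edge


print(dimensions_for(16, 9))   # YouTube thumbnail shape
print(dimensions_for(9, 16))   # Instagram Story shape
print(is_supported_ratio(4, 1))
```

Using `Fraction` keeps the boundary comparisons exact, so 3:1 and 1:3 themselves count as supported.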

One more thing worth noting. The warm yellow color cast that plagued GPT Image 1.5 outputs is now gone.

Colors now render true. It is a small fix that makes a noticeable difference in every image.

5. Stronger Instruction Following and Composition Control

Image credits: OpenAI

This is the feature that ties everything else together.

Accurate text, multilingual rendering, and reasoning mean nothing if the model cannot put them where you ask.

Spatial instructions work.

"Three identical robots in a row." "The red mug to the left of the laptop." Correct counts. Correct positions. Previous models would scramble these routinely.

Multi-edit prompts hold up.

Change the sign text, swap a label, adjust a background color. One request. All changes applied without breaking the rest of the image.

Object manipulation by name.

"Remove the person in the blue jacket." No masking. No manual selection. Just a sentence and it does everything for you.

Dense compositions hold together.

Infographics with multiple data points, multi-panel comic layouts, magazine spreads with charts and text blocks. The model handles layout logic, not just visual quality.

These five features together change a lot of things in practice:

- The two-step workflow of generating an image and then fixing the text in Photoshop is gone
- Non-English markets finally have a model that produces text they can actually ship
- UI mockups come out clean enough to hand directly to a coding tool
- A single prompt can produce a full campaign asset set with consistent branding across every format

For a deeper dive into GPT Image 2 features, also read: [blog coming soon]

GPT Image 2 vs Other AI Image Generators: How It Compares

Every model has its lane. Here is where each one leads.

Where GPT Image 2 stands alone: No other model currently combines accurate dense text, complex multi-element layouts, multilingual rendering, and strong instruction following in a single generation.

Other models may match it in one or two of those dimensions. None match on all four.

For a deeper look at how today's leading image generation models compare, check out our full guide: [coming soon]

Things To Keep In Mind While Using GPT Image 2

GPT Image 2 is the most capable image model available right now. But it is also not perfect. Here are some things to keep in mind before you depend on it for production work.

Restart your session between batches. The model can carry over data from previous generations within the same session. After a few images, noise patterns can build up and affect quality. Reloading the page before a new batch keeps outputs clean.

Visual sameness in design work. When generating multiple posters or graphics, outputs can start converging on a similar visual style. Varying your prompts with specific style directions will help keep things fresh here.

Source: Creative Bloq

Spotted something we should add here? Let us know about it on Twitter/X

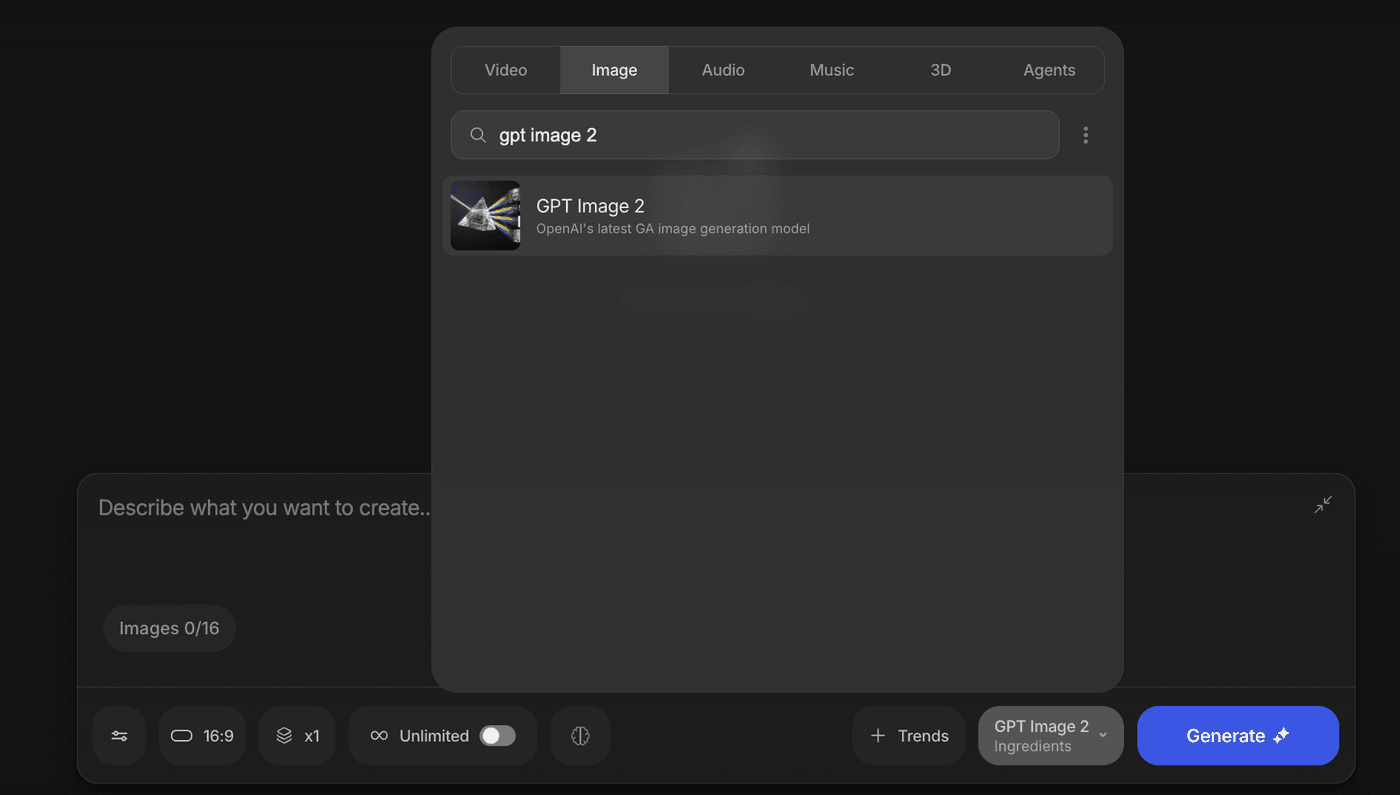

How to Create Images with GPT Image 2.0

GPT Image 2 is built directly into invideo. You can access it in two ways, depending on how much control you want.

Direct generation:

- Go to Agents & Models and select GPT Image 2 under image models
- Write a detailed prompt describing what you need
- Set your resolution and choose how many variations you want
- Hit generate

You get full control over the brief. Every parameter is yours to set.

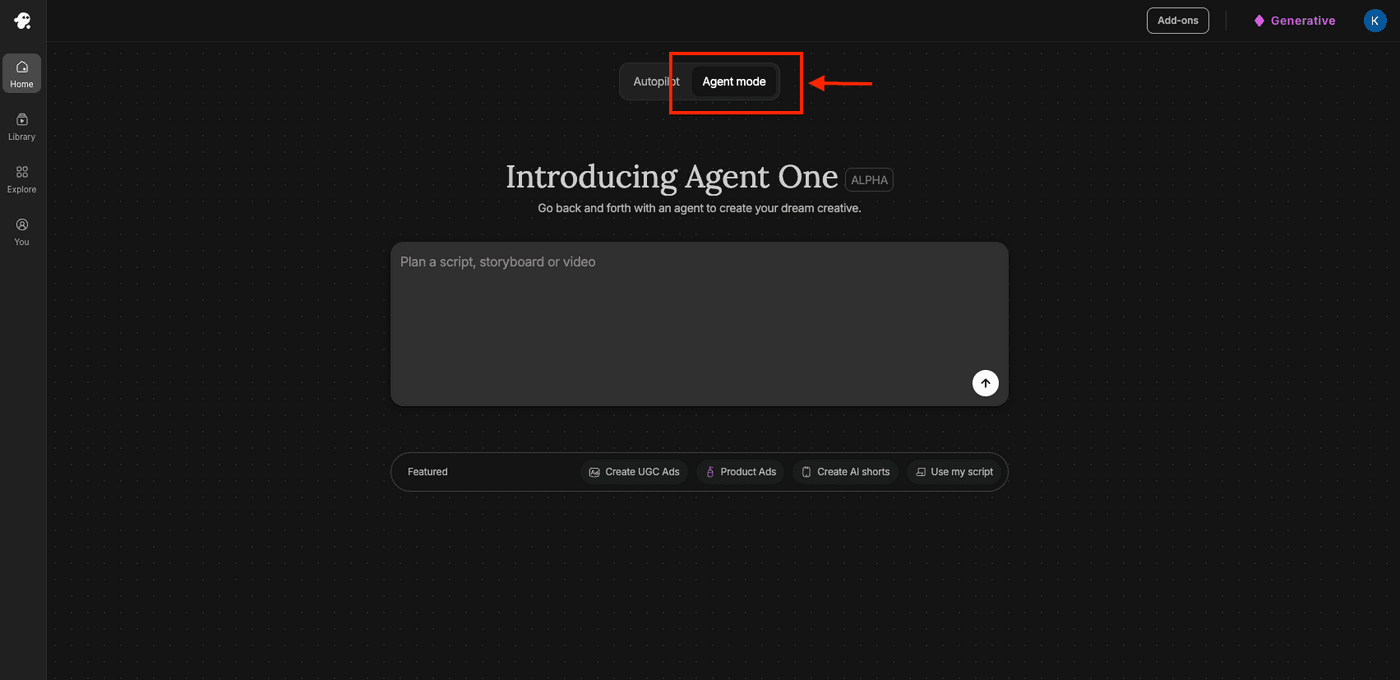

AI Agent mode:

- Select Agent Mode from the dashboard
- Describe what you need in plain language and tell Agent One to use GPT Image 2
- The agent ideates, writes a detailed prompt, and generates variations in a single pass, all on its own

This is the faster path when you would rather describe the outcome than engineer the prompt.

What makes both paths different from using GPT Image 2 through ChatGPT is that invideo holds your context.

You do not have to re-describe your brand, your style, or your scene every time you want a new variation.

Invideo remembers what you are working on, so iteration is a conversation, not a cold start.

And because everything lives inside the same workspace, there is no exporting, no importing, no switching tabs. The image goes from prompt to production without ever leaving the same workflow.

FAQs

1. What is GPT Image 2?

GPT Image 2 is OpenAI’s newest image generation model. It replaces the earlier GPT image pipeline and becomes OpenAI’s main image model after the retirement of DALL-E 2 and DALL-E 3.

2. What is the biggest improvement in GPT Image 2 over GPT Image 1.5?

The biggest improvement is text rendering. GPT Image 2 can generate readable text inside images far more accurately than older models, which makes it much more useful for posters, ads, packaging, menus, diagrams, and UI mockups.

3. How is GPT Image 2 different from older AI image generators?

Older AI image generators were strong at visuals but weak at text, layout, and instruction-following. GPT Image 2 is better at combining all three, which means it can create assets that are not just visually appealing, but actually usable.

4. Can GPT Image 2 generate images in different aspect ratios?

Yes. GPT Image 2 supports a much wider range of aspect ratios, including 16:9, 9:16, square, wide banner, and tall vertical formats. That makes it far more practical for social, video, and ad workflows.

5. What kinds of tasks is GPT Image 2 best at?

It is especially strong for text-heavy visuals, product mockups, UI concepts, marketing assets, multi-edit image workflows, and campaign drafts that need consistent branding across multiple formats.

6. Where does GPT Image 2 still fall short?

It can still struggle with physics, structural accuracy, technical data, close-up faces, and text placed on curved or deeply angled surfaces. It is a strong starting point, but production work still needs human review.

7. How do I use GPT Image 2 on invideo?

You can generate images with GPT Image 2 directly inside invideo and use those visuals immediately in your projects. That means you can move from generation to video creation, social assets, or campaign production without switching tools.

8. Is GPT Image 2 better than Midjourney or other image models?

It depends on the job. GPT Image 2 is stronger for text accuracy, layout-heavy assets, multilingual rendering, and instruction-following. Models like Midjourney may still lead on pure visual style in some cases.